The Governance Gap: Why Your Healthcare AI Needs an Operating System, Not Just a Checklist

1. Executive Summary

As artificial intelligence transitions from experimental innovation to safety-critical infrastructure, the healthcare industry faces a stark reality: over 950 AI-enabled medical devices are FDA-authorized, yet most clinical organizations have the manual capacity to govern only 3 to 5 systems effectively. This "Adoption Gap" creates significant liability: "silent failures," such as algorithmic drift caused by subtle changes in lab equipment, can compromise patient safety and invalidate regulatory approvals.

This piece argues that governance capacity, not model performance, is now the primary determinant of safe, effective, and trustworthy healthcare AI. To bridge the gap between regulatory ambitions like the EU AI Act and current organizational readiness, we propose an "AI Governance OS". By moving beyond one-time compliance toward a continuous, automated organizational capability, healthcare leaders can manage the "Many Hands" problem of diffused accountability while maintaining strict data privacy through non-invasive, signal-based monitoring.

2. The Problem: The Healthcare AI Governance Crisis

· The Regulatory Bottleneck: The volume of AI/ML-enabled medical devices has grown dramatically, with over 950 authorized as of late 2024 (FDA).

· The Adoption Gap: While over 950 devices are authorized, most hospitals have the manual capacity to govern only 3–5 systems effectively.

· Universal High-Risk: Under the EU AI Act, most healthcare AI is classified as "High Risk," requiring rigorous, continuous oversight.

· Safety-Critical "Silent" Failures: A "silent change" in a healthcare model isn't just a technical bug; it's a liability that can invalidate prior regulatory approvals and compromise patient safety.

To move from these systemic risks to a practical understanding of how they manifest in a clinical setting, we can examine the specific lifecycle of a diagnostic tool.

3. Case Study: The "PulseGuard AI" Sepsis Scenario

To show why continuous oversight is needed, consider PulseGuard AI, a representative scenario developed for this analysis. It does not represent a specific past or current project within the ESN portfolio, but it serves as a model for understanding the lifecycle of a sepsis early-warning system.

· The Lifecycle Gate: PulseGuard is authorized with an FDA PCCP (Predetermined Change Control Plan) that allows it to update its sensitivity, within pre-approved boundaries, as it gathers more data.

· The Failure: A hospital updates its lab equipment, subtly changing how "Lactate" levels are reported. PulseGuard begins to "drift," missing critical sepsis cases.

· The Governance Solution: An RCG (Regulatory, Compliance & Governance) platform acts as the "Check Engine Light," detecting this drift against pre-approved PCCP boundaries and alerting the Clinical Governance Committee before patient harm occurs.
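The "Check Engine Light" idea can be sketched in a few lines of Python. This is a minimal illustration, not a description of any actual RCG platform: the `ChangeEnvelope` class, the `check_signal` function, the signal name, and all numeric bounds are hypothetical and chosen only to mirror the lactate example above.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class ChangeEnvelope:
    """Hypothetical pre-approved bounds for one monitored signal."""
    signal: str
    lower: float
    upper: float


def check_signal(envelope: ChangeEnvelope, recent_values: list[float]) -> dict:
    """Compare the mean of recent observations with the pre-approved bounds."""
    if not recent_values:
        raise ValueError(f"no observations for {envelope.signal}")
    observed = mean(recent_values)
    return {
        "signal": envelope.signal,
        "observed": round(observed, 3),
        "within_envelope": envelope.lower <= observed <= envelope.upper,
    }


# Illustrative numbers only; real bounds would come from the approved PCCP.
lactate_envelope = ChangeEnvelope("mean_lactate_input", lower=1.0, upper=2.5)
before_change = [1.8, 2.1, 1.6, 2.0]  # readings before the lab equipment update
after_change = [0.7, 0.9, 0.8, 0.6]   # same patients' range, reported differently

for label, values in [("before", before_change), ("after", after_change)]:
    result = check_signal(lactate_envelope, values)
    status = "OK" if result["within_envelope"] else "ALERT: notify governance committee"
    print(label, result["observed"], status)
```

A production check would likely use a proper statistical drift test and track model outputs as well as inputs; the point here is only that the comparison target is the pre-approved boundary, not an ad hoc threshold.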

While the PulseGuard scenario highlights a single point of failure, preventing such "silent changes" across a health system requires a formalized map of the entire AI lifecycle and the people who manage it.

4. Lifecycle-Based Governance & The "Many Hands" Problem

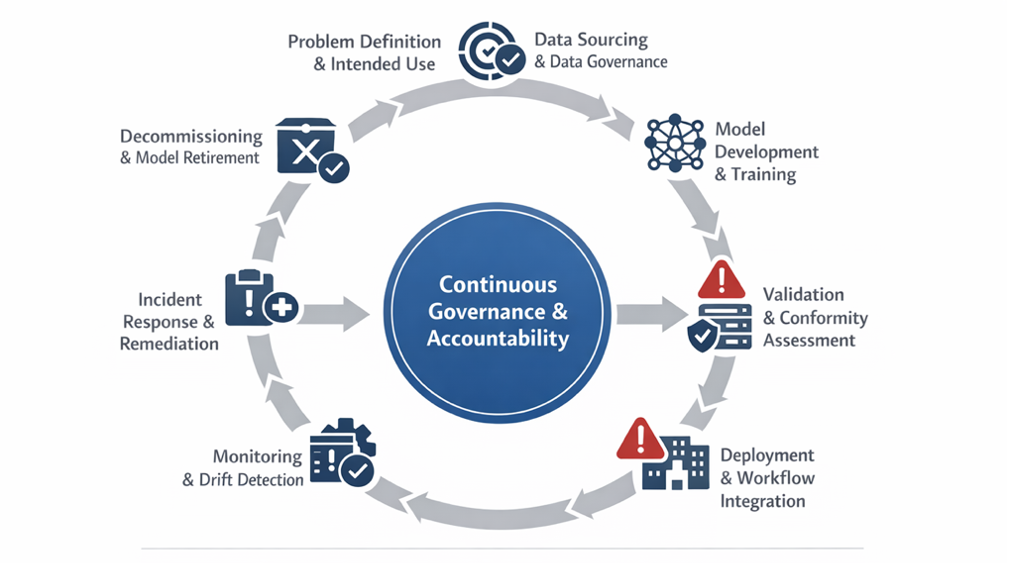

AI governance failures occur most frequently at lifecycle transitions—specifically from validation to deployment—rather than within individual stages.

Source: Adapted from lifecycle governance models in the EU AI Act, FDA SaMD, and NIST AI RMF.

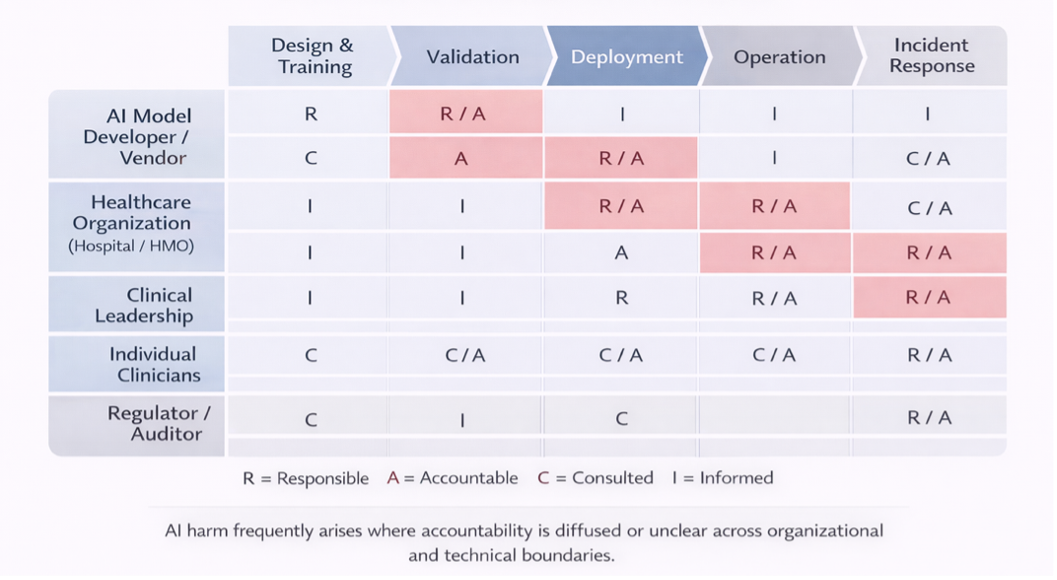

Responsibility for AI outcomes is often diffused across developers, deployers, clinicians, and vendors. Effective governance requires explicit accountability mapping to prevent the "Many Hands" problem.

Note: Red boxes denote zones of elevated accountability risk caused by overlapping or weakly operationalized responsibility.
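Explicit accountability mapping can be made checkable. The sketch below, under stated assumptions, flags lifecycle stages that have no accountable owner or more than one. The stage names, role names, and the `find_accountability_gaps` helper are hypothetical and are not taken from the figures above.

```python
def find_accountability_gaps(
    assignments: dict[str, list[str]], stages: list[str]
) -> dict[str, str]:
    """Return each stage whose accountable-owner list is empty or has several entries."""
    gaps = {}
    for stage in stages:
        owners = assignments.get(stage, [])
        if not owners:
            gaps[stage] = "no accountable owner"
        elif len(owners) > 1:
            gaps[stage] = "shared accountability: " + ", ".join(owners)
    return gaps


# Hypothetical assignment of accountable parties per lifecycle stage.
stages = ["validation", "deployment", "monitoring"]
assignments = {
    "validation": ["vendor"],
    "deployment": ["vendor", "hospital IT"],  # overlapping responsibility
    "monitoring": [],                          # nobody assigned
}

gaps = find_accountability_gaps(assignments, stages)
for stage, problem in gaps.items():
    print(f"{stage}: {problem}")
```

Requiring exactly one accountable owner per stage is a design choice borrowed from RACI-style matrices; an organization may prefer a different rule, but whichever rule it adopts should be checkable in this way.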

Defining accountability ensures we know who is responsible when a system falters, but these stakeholders cannot act without the technical tools to oversee performance while strictly maintaining data privacy.

5. The Technical Moat: Privacy-Preserving Governance

Traditional governance often hits a "Privacy Deadlock" where data protection requirements restrict auditing. Our approach utilizes:

· Synthetic Data Simulation: Testing AI for bias and safety without requiring access to proprietary code or sensitive demographic data.

· Non-Invasive Architecture: A cloud-based SaaS that connects to existing tech stacks via APIs to monitor performance "signals" rather than raw data.

· Automated Copilot: Built-in mappings for 15+ global frameworks (EU AI Act, NIST AI RMF, ISO 42001).
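To make the difference between "signals" and raw data concrete, the following sketch reduces per-patient prediction records to aggregate metrics inside the hospital boundary, so that only the aggregates would need to leave it. The record fields (`patient_id`, `alerted`, `sepsis`) and the `summarize_predictions` function are assumptions made for illustration, not part of any described API.

```python
from typing import Optional


def summarize_predictions(records: list[dict]) -> dict:
    """Reduce per-patient records to aggregate signals with no identifiers."""
    n = len(records)
    if n == 0:
        return {"n": 0, "alert_rate": None, "sensitivity": None}
    alerts = sum(1 for r in records if r["alerted"])
    true_pos = sum(1 for r in records if r["alerted"] and r["sepsis"])
    confirmed = sum(1 for r in records if r["sepsis"])
    sensitivity: Optional[float] = true_pos / confirmed if confirmed else None
    return {"n": n, "alert_rate": alerts / n, "sensitivity": sensitivity}


# Hypothetical records that stay inside the hospital's own systems.
records = [
    {"patient_id": "a1", "alerted": True, "sepsis": True},
    {"patient_id": "b2", "alerted": False, "sepsis": True},
    {"patient_id": "c3", "alerted": True, "sepsis": False},
    {"patient_id": "d4", "alerted": False, "sepsis": False},
]

signal = summarize_predictions(records)
print(signal)  # only this aggregate payload would be sent to the monitoring service
```

Aggregation alone does not guarantee privacy for very small cohorts, so a real deployment would likely add minimum cohort sizes or similar safeguards before transmitting signals.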

By solving these technical and privacy constraints, this governance framework becomes a scalable asset not just for hospitals, but for any stakeholder in the broader medical ecosystem facing high-stakes regulatory pressure.

6. Market Strategy: Beyond the Hospital

· Pharma & Life Sciences: Ensuring Trial Integrity. Beyond hospital walls, the "AI Governance OS" is critical for clinical development. We focus on automating PCCP boundary monitoring for AI-driven clinical trial matching. By ensuring that recruitment algorithms stay within pre-approved "Change Envelopes," sponsors can maintain the statistical validity of their trials without manual, resource-heavy auditing.

· Medical Device SMEs: Scaling Regulatory Excellence. For startups and SMEs, global compliance is often a barrier to entry rather than a milestone. We provide a "Regulatory-as-a-Service" model that allows smaller teams to meet the rigorous standards of the EU AI Act and FDA SaMD without the overhead of a massive internal compliance department. This levels the playing field, allowing innovation to reach patients faster while maintaining the highest safety standards.

Whether in a startup or a global pharmaceutical firm, the goal remains the same: transforming regulatory burden into a transparent, automated, and safety-first operating system.

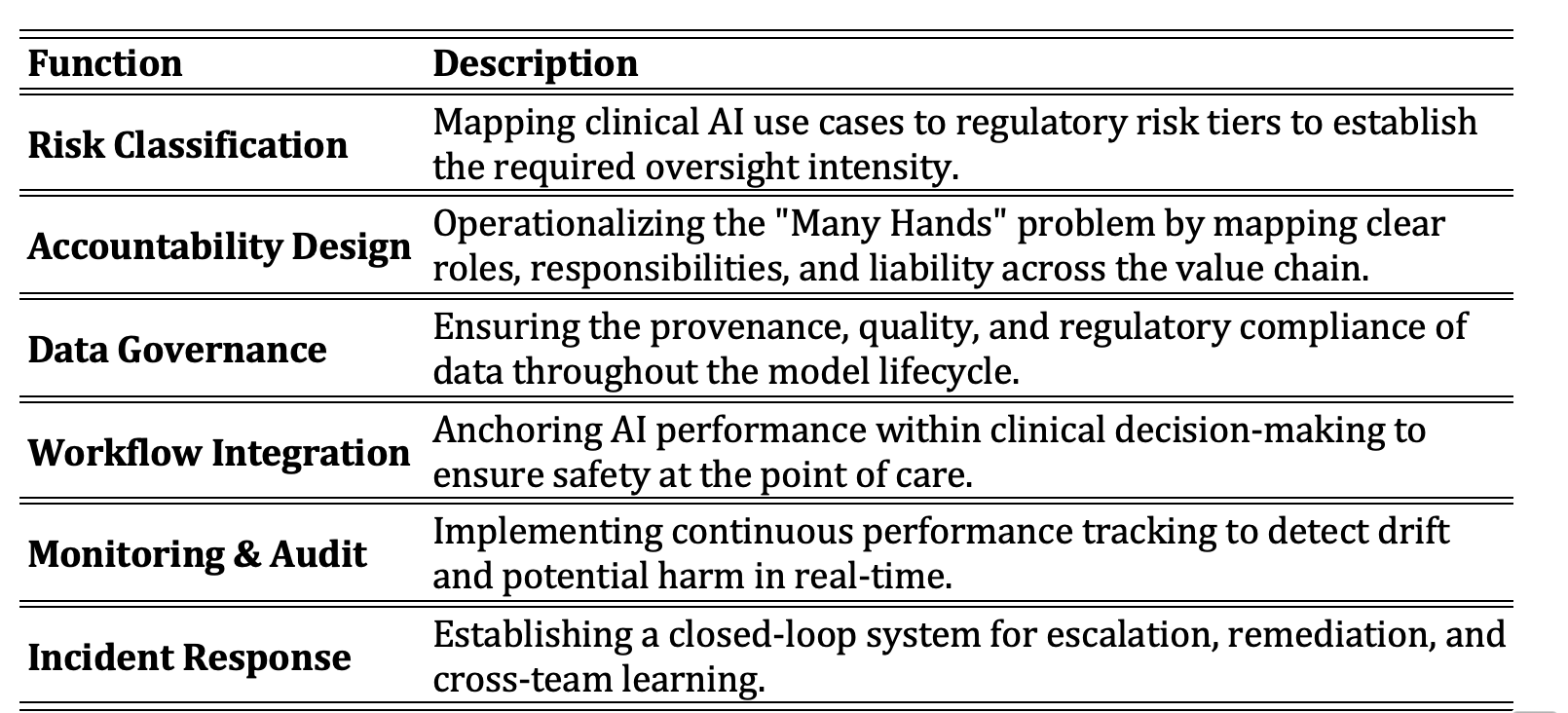

7. Core AI Governance Functions

8. Verified References

· European Commission (2024): EU Artificial Intelligence Act. Establishes lifecycle obligations.

· U.S. FDA (2022–2023): Software as a Medical Device (SaMD) Guidance. Evidence that failures occur post-deployment.

· NIST (2023): AI Risk Management Framework (AI RMF 1.0). Govern, Map, Measure, Manage lifecycle model.

· FDA Database (2024): AI/ML-Enabled Medical Devices.